MicroZed Chronicles: Fixed-Point Math

- Adam Taylor

- Mar 8, 2023

Updated: Jun 25, 2025

One of the first articles I ever wrote was about how FPGAs perform fixed-point math. Understanding this is important because FPGA-based mathematics is used in a wide range of applications, from image and signal processing to robotics, control systems, and motor control.

When it comes to implementing math with an FPGA, we are going to be leveraging elements such as the DSP48. This allows us to perform multiplication and accumulation and, when coupled with registers and Configurable Logic Blocks, gives us the ability to implement a wide range of mathematical functions.

Most implementations of FPGA math use fixed-point mathematics. Fixed-point math enables a much simpler implementation, which is better for both performance and power.

A fixed-point number will have the decimal point at a fixed location in the vector. To the left of the decimal point is the integer element while the fractional element is to the right. This means that we may need to use several registers to accurately quantize the number. However, registers are normally plentiful in FPGAs.
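As a minimal sketch of this layout, the Python below splits a fixed-point word into its integer and fractional elements. The 16-bit word and the choice of 8 fractional bits are assumptions made purely for illustration, as are the helper names.

```python
FRAC_BITS = 8  # assumed position of the decimal point (illustrative only)


def split_fixed(raw, frac_bits=FRAC_BITS):
    """Return the (integer element, fractional element) of an unsigned word."""
    integer_part = raw >> frac_bits
    fraction_part = raw & ((1 << frac_bits) - 1)
    return integer_part, fraction_part


def fixed_to_float(raw, frac_bits=FRAC_BITS):
    """Interpret an unsigned fixed-point word as a real number."""
    return raw / (1 << frac_bits)


raw = 0x0280  # 0000_0010 . 1000_0000
print(split_fixed(raw))     # (2, 128): integer 2, fraction 128/256
print(fixed_to_float(raw))  # 2.5
```

The stored word is just an integer; the position of the decimal point is a convention that the designer has to track.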

By contrast, a floating-point number can represent a much wider range of values within a fixed register width (e.g., 32 bits). The register is split into sign, exponent, and mantissa fields, and the decimal point can float, enabling values far larger than the 2^32-1 maximum of the same register used as a straight 32-bit integer.
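To make the sign, exponent, and mantissa split concrete, here is a small sketch that pulls the three fields out of a 32-bit float. It assumes the common IEEE 754 single-precision layout (1 sign bit, 8 exponent bits, 23 mantissa bits) and uses only Python's standard `struct` module; the function name is hypothetical.

```python
import struct


def float32_fields(value):
    """Return (sign, biased exponent, mantissa) of value as a 32-bit float."""
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    sign = bits >> 31
    exponent = (bits >> 23) & 0xFF  # biased by 127
    mantissa = bits & 0x7FFFFF      # 23 stored fraction bits
    return sign, exponent, mantissa


print(float32_fields(1.0))      # (0, 127, 0)
print(float32_fields(0.15625))  # (0, 124, 2097152), i.e. 1.25 x 2^-3
print(float32_fields(-2.0))     # (1, 128, 0)
```

Because the exponent scales the mantissa, the same 32 bits can hold a value such as 1e30, which is far beyond 2^32-1, at the cost of more complex hardware to add and multiply.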

However, fixed-point math is much less complex to implement in programmable logic. A fixed-point solution uses significantly fewer resources, which makes routing easier and therefore increases the achievable performance.

Note that there are rules and nomenclature used when working with fixed-point math. One of the first and most important is how engineers describe the location of the decimal point within the vector. One of the most used formats is the Q format (quantized format in its long form). Q format is written as Qx, where x is the number of fractional bits in the word. For example, Q8 would mean the decimal point is located between the 8th and 9th bits of the word.
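A rough sketch of Q-format conversion is shown below. The helper names `to_q` and `from_q` are hypothetical, and the sketch assumes truncation (dropping the bits that do not fit) rather than rounding.

```python
import math


def to_q(value, frac_bits):
    """Quantize a real value to Qx by scaling by 2^x and truncating."""
    return math.floor(value * (1 << frac_bits))


def from_q(raw, frac_bits):
    """Convert a Qx integer back to the real value it represents."""
    return raw / (1 << frac_bits)


raw = to_q(3.14159, 8)  # Q8: scale by 2^8 = 256
print(raw)              # 804
print(from_q(raw, 8))   # 3.140625
```

The difference between 3.14159 and 3.140625 is the quantization error for this choice of Q8.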

How we determine the number of necessary fractional bits depends on the accuracy required. For example, if we wanted to store the number 1.4530986319×10^-4 using Q format, we would need to determine the number of fractional bits necessary.

If we wanted to use 16 fractional bits, we multiply the number by 65536 (2^16), which results in a value of approximately 9.523. Only the integer part, 9, can be stored in the registers, as we cannot store the fractional part of the scaled value. The accuracy is therefore not very good: 9/65536 = 1.37329101563×10^-4. This loss of accuracy might impact the end results.

Instead of using 16 fractional bits, we could use 28 fractional bits, which would result in the value 39006 being stored in the fractional registers. This gives a much more accurate quantized result, since 39006/2^28 is approximately 1.45309×10^-4.
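The two quantization choices above can be compared with a short sketch. The function name `quantize` is hypothetical, and truncation is assumed in order to match the worked numbers.

```python
import math

VALUE = 1.4530986319e-4


def quantize(value, frac_bits):
    """Return (stored integer, recovered value, relative error)."""
    raw = math.floor(value * 2**frac_bits)
    recovered = raw / 2**frac_bits
    rel_error = abs(value - recovered) / value
    return raw, recovered, rel_error


for frac_bits in (16, 28):
    raw, recovered, rel_error = quantize(VALUE, frac_bits)
    print(f"Q{frac_bits}: raw={raw} recovered={recovered:.6e} "
          f"relative error={rel_error:.2e}")
```

With 16 fractional bits the stored value is 9 and the relative error is a little over 5%; with 28 fractional bits the stored value is 39006 and the relative error falls to the order of 10^-5.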

With the basics of quantization understood, the next step is to understand the rules regarding the alignment of the decimal points for the mathematical operations. We will not get the correct result if we perform operations and the decimal points are not aligned.

Addition – Decimal points must be aligned

Subtraction – Decimal points must be aligned

Division – Decimal points must be aligned

Multiplication – Decimal points do not need to be aligned
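The addition and multiplication rules can be illustrated with a small sketch. It assumes unsigned values held as (raw integer, number of fractional bits) pairs; the helper names are hypothetical and this is not taken from any particular library.

```python
def align(raw, frac_bits, target_frac_bits):
    """Left-shift a value so it has target_frac_bits fractional bits."""
    assert target_frac_bits >= frac_bits
    return raw << (target_frac_bits - frac_bits)


def add_fixed(a_raw, a_frac, b_raw, b_frac):
    """Add two fixed-point values after aligning their decimal points."""
    frac = max(a_frac, b_frac)
    total = align(a_raw, a_frac, frac) + align(b_raw, b_frac, frac)
    return total, frac


def mul_fixed(a_raw, a_frac, b_raw, b_frac):
    """Multiply without alignment; the fractional bit counts simply add."""
    return a_raw * b_raw, a_frac + b_frac


a_raw, a_frac = 384, 8  # 1.5 in Q8
b_raw, b_frac = 36, 4   # 2.25 in Q4

total, frac = add_fixed(a_raw, a_frac, b_raw, b_frac)
print(total / 2**frac)            # 3.75 (correct)
print((a_raw + b_raw) / 2**8)     # 1.640625 (wrong: points not aligned)

product, frac = mul_fixed(a_raw, a_frac, b_raw, b_frac)
print(product / 2**frac)          # 3.375, a Q12 result
```

Multiplication works without alignment because the scale factors multiply, so a Q8 value times a Q4 value is naturally a Q12 value.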

We also need to consider the impact of the operation on the size of the resultant vector. Failure to account for the size of the result can lead to overflow. The table below shows the rules for the sizing of the results.

This gives the following results, assuming we have two vectors (one 16 bits wide, the other 8 bits wide):

C (16 downto 0) = A (15 downto 0) + B (7 downto 0)

C (16 downto 0) = A (15 downto 0) - B (7 downto 0)

C (23 downto 0) = A (15 downto 0) * B (7 downto 0)

C (8 downto -1) = A (15 downto 0) / B (7 downto 0)

The -1 above reflects an increase in the size of the result to hold a fractional element. Depending on which type is being used, this might be written as 8 downto -1 if the VHDL fixed-point package is used, or as 9 downto 0 in Q1 format otherwise.
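As a quick sanity check on result sizing for addition and multiplication, the sketch below computes how many bits the worst-case results need. It assumes unsigned 16-bit and 8-bit operands, and the helper name `bits_needed` is hypothetical.

```python
def bits_needed(value):
    """Number of bits required to hold a non-negative integer."""
    return max(1, value.bit_length())


A_BITS, B_BITS = 16, 8
a_max = (1 << A_BITS) - 1  # largest unsigned 16-bit value
b_max = (1 << B_BITS) - 1  # largest unsigned 8-bit value

sum_bits = bits_needed(a_max + b_max)
product_bits = bits_needed(a_max * b_max)

print(sum_bits)      # 17: one bit more than the wider operand
print(product_bits)  # 24: the sum of the two operand widths
```

A result vector narrower than these widths would overflow for the largest input values.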

The ability to work with fixed-point math is very important and hopefully this blog has provided a basic introduction to the concept. In the next blog, we will take a look at a more in-depth example before evaluating some advanced techniques to easily implement more complex structures in our programmable logic designs.

Workshops and Webinars

Upcoming webinar - Introduction to Vivado 30th March Enjoy the blog why not take a look at the free webinars, workshops and training courses we have created over the years. Highlights include

Ultra96, MiniZed & ZU1 three day course looking at HW, SW and Petalinux

Arty Z7-20 Class looking at HW, SW and Petalinux

Mastering MicroBlaze learn how to create MicroBlaze solutions

HLS Hero Workshop learn how to create High Level Synthesis based solutions

Embedded System Book

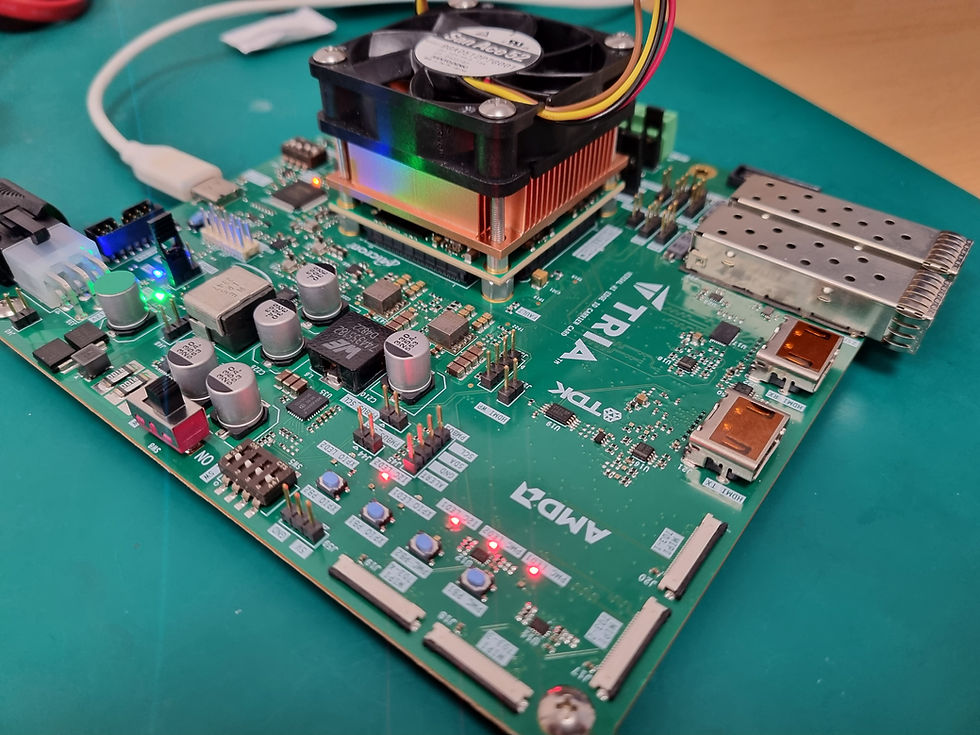

Do you want to know more about designing embedded systems from scratch? Check out our book on creating embedded systems. This book will walk you through all the stages of requirements, architecture, component selection, schematics, layout, and FPGA / software design.

We designed and manufactured the board at the heart of the book! The schematics and layout are available in Altium here

Learn more about the board (see previous blogs on Bring up, DDR validation, USB, Sensors) and view the schematics here.

Order here

Sponsored by AMD Xilinx