MicroZed Chronicles: PYNQ Composable Overlays

- Sep 8, 2021

Updated: Jun 27, 2025

I have talked on several occasions about my love of PYNQ for rapid prototyping of applications, both commercially and for fun projects. There are times, however, when we want to modify the overlay. That, of course, means we need to rebuild the overlay. A good example is when we are working with an image processing algorithm and would like to change the order of processing blocks or switch one out for another.

To address this problem for image processing applications, the PYNQ team recently released the composable video overlay. This overlay provides the developer with the ability to change the image processing pipeline without the need to implement the overlay in Vivado. The overlay uses a novel approach to provide this capability.

The ability to compose the pipeline comes from two features. First, an AXI stream switch allows data to be routed arbitrarily from one port to another, depending upon how it is instructed to route the data. Second, the composable overlay has four DFX (Dynamic Function eXchange) regions to enable dynamic loading of functions. In addition, six static functions are connected to the AXIS switch and implemented outside the DFX regions.
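To make the routing idea concrete, here is a minimal software sketch of a stream switch. It is a toy model only, not the register map or behaviour of the AXI4-Stream switch IP: the class name `ToyStreamSwitch`, its methods, and the rule that one input drives at most one output are all assumptions made for illustration.

```python
class ToyStreamSwitch:
    """Toy model of a stream switch (hypothetical, for illustration only)."""

    def __init__(self, num_inputs, num_outputs):
        self.num_inputs = num_inputs
        self.num_outputs = num_outputs
        self.routes = {}  # output port -> input port currently driving it

    def connect(self, input_port, output_port):
        """Route one input port to one output port."""
        if not 0 <= input_port < self.num_inputs:
            raise ValueError("input port out of range")
        if not 0 <= output_port < self.num_outputs:
            raise ValueError("output port out of range")
        # Assumption of this sketch: an input may drive only one output.
        others = {o: i for o, i in self.routes.items() if o != output_port}
        if input_port in others.values():
            raise ValueError("input port is already routed to another output")
        self.routes[output_port] = input_port

    def forward(self, inputs):
        """Map {input port: data} to {output port: data} using the routes."""
        return {out: inputs[inp]
                for out, inp in self.routes.items() if inp in inputs}


switch = ToyStreamSwitch(num_inputs=4, num_outputs=4)
switch.connect(input_port=0, output_port=2)
switch.connect(input_port=1, output_port=0)
routed = switch.forward({0: "frame_a", 1: "frame_b"})
print(routed)
```

Changing the pipeline then amounts to rewriting the routing table rather than rebuilding the hardware, which is the property the composable overlay relies on.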

Of course, there is also a software API that supports control of the pipeline from PYNQ.

Here are the static and dynamic functions implemented in the composable overlay.

Several of the DFX functions require the use of both PR_1 and PR_0 when implemented. You can find more information on the DFX regions here.

Installing the composable overlay is pretty simple. At the moment, the PYNQ-Z2 is the board supported for this application.

Enter the following commands in a terminal window.

git clone https://github.com/Xilinx/PYNQ_Composable_Pipeline

pip install PYNQ_Composable_Pipeline/

pynq-get-notebooks composable-pipeline -p $PYNQ_JUPYTER_NOTEBOOKS

Once the overlay has been installed, you will see a new notebook directory beneath the PYNQ Jupyter notebooks folder. It contains several notebooks that explain how the composable overlay works.

At the most basic level, we get started by loading the composable overlay using the new package Composable_pipeline, which downloads the composable overlay to the device. Once it is loaded, we can explore the dictionary of functions, which shows both the loaded and unloaded functions. By default, of course, only the static functions appear as loaded.

The important part of the overlay is the composing of the pipeline. This is very simple and is implemented by creating a list. For example, [a,b,c,d] and [a,b,[[c,d],[e]],f,g] would result in the following structures.

    A -> B -> C -> D

And

              /-> C -> D ->\
    A -> B ->               -> F -> G
              \-> E ------>/

Of course, in the composable pipeline, A,B,C,D etc. are replaced by the names of the desired image processing functions.
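The list notation can be read as a small graph description. The sketch below is not the library's implementation, just one plain-Python way to expand such a nested list into the connections it implies. The function name `expand_pipeline` is hypothetical, and the sketch assumes a nested list element holds parallel branches, each of which is itself a linear list of stages.

```python
def expand_pipeline(pipeline):
    """Return the (source, destination) edges implied by a nested list."""
    edges = []
    tails = []  # nodes whose output feeds the next stage
    for stage in pipeline:
        if isinstance(stage, list):
            # Parallel branches: every branch starts from the current tails.
            new_tails = []
            for branch in stage:
                prev = tails
                for node in branch:
                    edges.extend((p, node) for p in prev)
                    prev = [node]
                new_tails.extend(prev)
            tails = new_tails
        else:
            # Single stage: connect every current tail to it.
            edges.extend((t, stage) for t in tails)
            tails = [stage]
    return edges


linear = expand_pipeline(["a", "b", "c", "d"])
branched = expand_pipeline(["a", "b", [["c", "d"], ["e"]], "f", "g"])
print(linear)
print(branched)
```

In the branched case, B fans out to C and E, and both D and E feed F, which matches the second structure drawn above.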

Once we have created the list, for example:

video_pipeline = [video_in_in, lut, video_in_out]

We can compose the video pipeline, and the video displayed on the monitor will change to reflect the new pipeline.

cpipe.compose(video_pipeline)

Creating pipelines in this manner is very simple. For this example, I had a 720p HDMI source and an HDMI display as the sink.

Running the introduction notebook passes the image straight through at start-up. The displayed image then changes as each new pipeline is composed and loaded.

One of the really cool things about the pipeline is that it can be visualized using the graph attribute.
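The graph attribute does this rendering for us. Purely to illustrate the idea of turning a pipeline into a drawing, and not as a description of how the library does it, here is a small sketch that writes a list of connections as Graphviz DOT text. The helper name `to_dot` and the hard-coded edge list are assumptions made for the example.

```python
def to_dot(edges):
    """Format (source, destination) pairs as a left-to-right DOT digraph."""
    lines = ["digraph pipeline {", "    rankdir=LR;"]
    for src, dst in edges:
        lines.append(f'    "{src}" -> "{dst}";')
    lines.append("}")
    return "\n".join(lines)


# Edges for the simple three-stage pipeline used in this blog
example_edges = [("video_in_in", "lut"), ("lut", "video_in_out")]
dot_text = to_dot(example_edges)
print(dot_text)
```

The resulting text can be pasted into any Graphviz viewer to draw the pipeline.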

We can also inspect outputs from different stages of the composable pipeline using the tap attribute. This can be useful for working with the image processing chain and determining if each step of the pipeline is doing what you would expect.

I must say, I really like this idea and will explore the creation of a custom pipeline for an application in an upcoming blog.

Upcoming Book!

Do you want to know more about designing embedded systems from scratch? Check out our upcoming book on creating embedded systems. This book will walk you through all the stages of requirements, architecture, component selection, schematics, layout, and FPGA / software design.

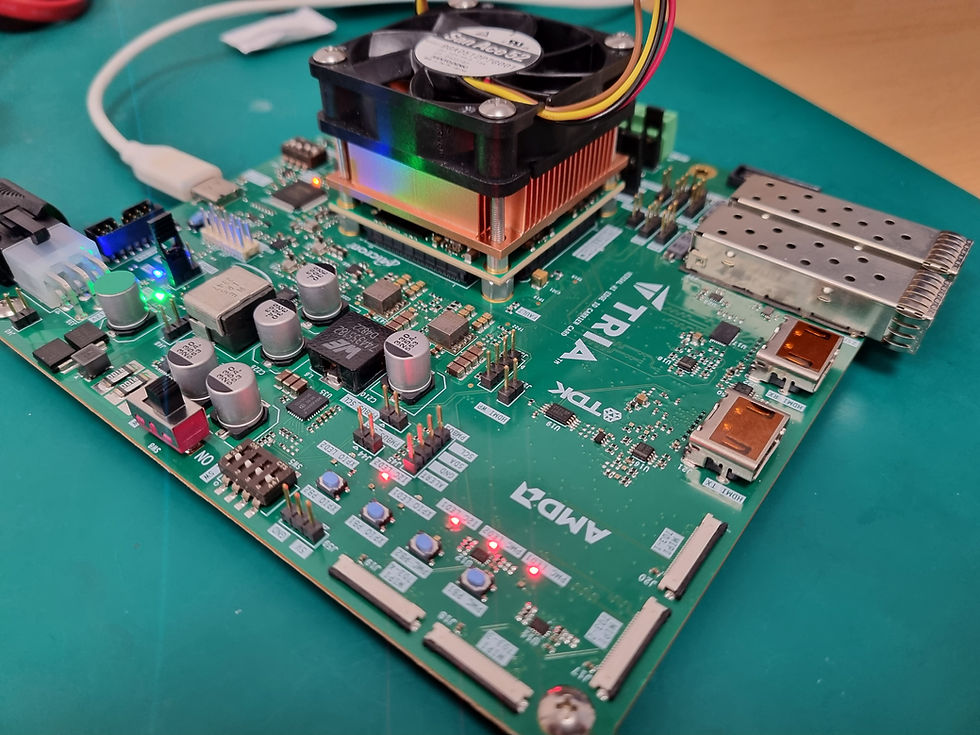

We designed and manufactured the board at the heart of the book!

Learn more about the board (see previous blogs on Bring up, DDR validation, USB, Sensors) and view the schematics here.

The schematics are available now, and the layout will be made available on a new website soon!